This is quite possibly my last blog post of the year, and I want to wish everyone a happy and productive 2011. What a year it has been! I should probably write a 2010 retrospective in a later post, but for now I want to stress the importance of unit testing.

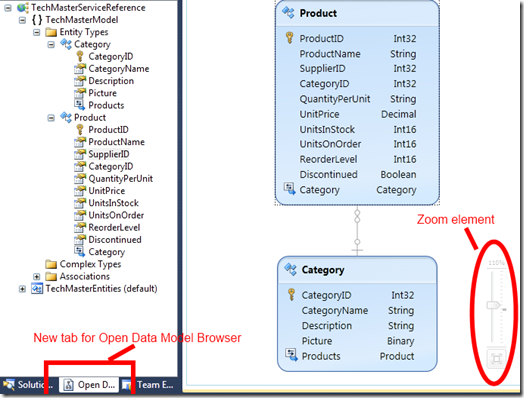

My team is currently working on a partner integration project: we call a few of the partner's web services to initiate a process, and in return we expose a few services (URIs) that receive the process results. We are using WCF, C#, .NET 3.5, LINQ to SQL, and SQL Server as the backend.

We are a few months into the design, specification, and development of the project, and we have made decent progress. The code is factored into three separate projects (Services, Business, and Data Access), which is quite typical for .NET enterprise projects, and two further projects hold the tests for that code base.

I am not sure whether you belong to the camp that tests its code, or whether you would rather use the FDD method (Faith-Driven Development: “I believe my code will work”). I plucked the term from a relatively recent tweet by @lazycoder, though I believe he was retweeting it, so you would have to dig a little to find out who originally coined it. FDD is an easy practice to fall into, especially when you are short on time and just want to declare “I am done”. I hope you belong to the TDD (Test-Driven Development) camp.

So, why unit test? I have read many blog posts on the subject, so rather than repeat them, I will share my own view of why and how it works for me. First and foremost, it makes me “think” about my code and what I want to deliver. You take one feature that you want to develop, and develop well; once you have tested and worked out that feature, you move on to the next.

It also pushes you toward writing code whose pieces are decoupled from each other. Ask yourself: how many times do you “new up” an object inside a method? Could you use dependency injection instead? By the way, there is a great book (currently in MEAP) by Mark Seemann called Dependency Injection in .NET, which I have been reading along; I suggest you check it out. Any time you create an instance of an object inside your method, you are creating coupling. That coupling hurts as soon as those instances are not available, for example when you try to exercise the method in a unit test without the database or service it depends on. But you already know that, right?
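To make the “new up” point concrete, here is a minimal sketch of constructor injection. It is written in Java, whose syntax maps almost one-to-one onto C#, and every name in it (`ResultRepository`, `SqlResultRepository`, `ResultProcessor`, `InMemoryResultRepository`) is hypothetical rather than taken from our project. The first processor creates its repository inside the method, so it cannot run without a real database; the second receives the repository through its constructor, so a test can hand it an in-memory fake.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical abstraction over the data-access layer.
interface ResultRepository {
    void save(String partnerId, String result);
}

// Placeholder for a real data-access class (hypothetical). It throws here
// to simulate a database that is not available during a test run.
class SqlResultRepository implements ResultRepository {
    public void save(String partnerId, String result) {
        throw new UnsupportedOperationException("needs a live database");
    }
}

// Coupled version: the dependency is "newed up" inside the method,
// so there is no way to swap it out in a test.
class CoupledResultProcessor {
    void process(String partnerId, String result) {
        ResultRepository repo = new SqlResultRepository(); // hard-wired dependency
        repo.save(partnerId, result.trim());
    }
}

// Decoupled version: the dependency arrives through the constructor.
class ResultProcessor {
    private final ResultRepository repository;

    ResultProcessor(ResultRepository repository) {
        this.repository = repository;
    }

    void process(String partnerId, String result) {
        repository.save(partnerId, result.trim());
    }
}

// Test double that records each save in memory instead of hitting a database.
class InMemoryResultRepository implements ResultRepository {
    final List<String> saved = new ArrayList<>();

    public void save(String partnerId, String result) {
        saved.add(partnerId + ":" + result);
    }
}
```

A test then needs nothing but the fake: `new ResultProcessor(fake).process("acme", " done ")` leaves the single entry `acme:done` in `fake.saved`. In production you would pass in the real repository (or let a DI container do the wiring), and `ResultProcessor` itself never changes.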

Most of the time, you also get to see and test the edge cases that could otherwise be overlooked, because you can easily fake, stub, or mock an object and write a test case for each of them. You can plan for exceptions and exercise them in isolation. Don’t leave it to the user (or the UI) to discover how your code behaves in those cases.
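Here is a similar sketch for the edge-case point, again in Java with hypothetical names (`PartnerClient`, `ProcessInitiator`, `TimingOutPartnerClient`). The stub always throws a `TimeoutException`, which lets a test drive the failure path on demand instead of waiting for a real partner service to time out. A mocking library could generate such a stub for you; here it is written by hand to keep the example self-contained.

```java
import java.util.concurrent.TimeoutException;

// Hypothetical wrapper around the partner's web service.
interface PartnerClient {
    String startProcess(String orderId) throws TimeoutException;
}

// Class under test: decides what happens when input is bad or the partner call fails.
class ProcessInitiator {
    private final PartnerClient client;

    ProcessInitiator(PartnerClient client) {
        this.client = client;
    }

    String initiate(String orderId) {
        // Edge case 1: reject missing input before calling the partner at all.
        if (orderId == null || orderId.isEmpty()) {
            throw new IllegalArgumentException("orderId is required");
        }
        try {
            return "STARTED:" + client.startProcess(orderId);
        } catch (TimeoutException e) {
            // Edge case 2: the partner did not answer in time; signal a retry.
            return "RETRY";
        }
    }
}

// Hand-rolled stub that always times out, so the failure path runs in isolation.
class TimingOutPartnerClient implements PartnerClient {
    public String startProcess(String orderId) throws TimeoutException {
        throw new TimeoutException("simulated partner timeout");
    }
}
```

A test asserts that `new ProcessInitiator(new TimingOutPartnerClient()).initiate("42")` returns `"RETRY"`, and a second test can check that an empty order id is rejected with an `IllegalArgumentException`, all without touching the network.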

Increased Decoupling

I can’t put it more plainly than this. Do you want decoupled code? A better design? Then you should unit test. Refactoring becomes easy, too, because unit tests let you focus on one thing at a time rather than on the three or four layers above or below it.

In short (and I want to keep this short), if you are writing enterprise applications, I see no way of doing it without unit tests (plus integration tests). Oh, before I go, please check out ReSharper, a great Visual Studio plugin that especially helps with this process and offers many other enhancements.

Thanks for reading and Happy New Year!

Baskin